Featured Project

FeaturedDataWarehouseInsuranceETLSQLDataEngineering

NFIP Insurance Data Warehouse

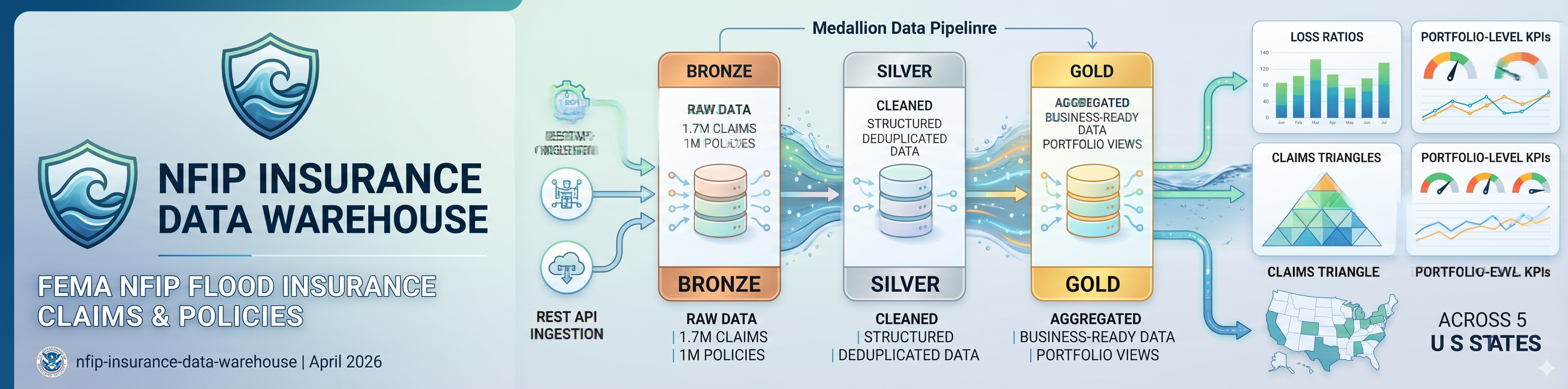

End-to-end insurance data warehouse built on 1.7M real flood insurance claims and 1M policies from FEMA's NFIP, ingested via REST API. Medallion Architecture pipeline producing loss ratios, claims triangles, and portfolio-level KPIs across five US states.

Domain

General Insurance · Flood Insurance · Data Engineering

Data Sources

OpenFEMA API · NFIP REdacted Claims v2 · NFIP Redacted Policies v2 · FEMA

Stack

Python · T-SQL · SQL Server · Azure SQL Edge · Docker · pandas · pyodbc

Published

April 2026

General insurers manage risk across two core data domains — the policies they underwrite and the claims those policies generate. These datasets originate from separate operational systems and must be joined, reconciled, and structured before any analytics can occur. This project builds that foundational data infrastructure using real flood insurance data at genuine scale.

The Data

The warehouse ingests real claims and policy data from FEMA's National Flood Insurance Program via the OpenFEMA REST API — no static CSV download. A Python ingestion pipeline makes paginated API calls filtered to five high-risk US states (Florida, Louisiana, Texas, New Jersey, New York), handling rate limiting, retry logic, column selection, and resume support across sessions. Result: 1,703,977 claims transactions and 1,000,000 policy records loaded into a three-layer Medallion pipeline.

The Architecture

Bronze stores raw API data as-is in VARCHAR columns with full audit metadata — batch ID, ingestion timestamp, source endpoint. The dynamic table creation approach means the schema adapts to actual API column names rather than assumed ones, which proved essential when the OpenFEMA API returned field names that differed from documentation.

Silver applies data type casting, NULL handling, deduplication, flood zone normalisation (mapping raw NFIP zone codes to A/V/X/D categories), FIPS state resolution, and policy exposure derivation. Of the 1,703,977 Bronze claims, 1,328,816 survive to Silver after deduplication and NULL date filtering — a documented 22% drop.

Gold implements a star schema with two fact tables and five conformed dimensions — 1,329,004 claim facts and 823,465 policy facts, joined to a 19,358-row date dimension spanning the full NFIP history from 1978 to 2030, 469 location entries across five states, and 68 distinct flood zone codes normalised to five categories.

Key Findings from the Analytics Layers

Large loss concentration — The P95 loss threshold across the portfolio is $185,607 per claim. Florida leads with 22,119 catastrophic claims totalling $7.54B — 41.8% of its entire total paid comes from just the top 5% of losses. Texas shows 18,242 large losses at $4.9B (31.3% concentration). This tail-driven loss pattern is characteristic of flood insurance and has direct implications for catastrophe reinsurance pricing.

Claims development by accident year — The data spans the full history of US flood insurance. 2005 (Katrina) generated 131,431 claims and $10.1B in total paid — the largest single accident year in the dataset. 2017 (Harvey) produced 97,064 claims at an average severity of $90,735 per claim. 2012 (Sandy) generated 138,117 claims at $60,596 average. Average severity has risen significantly over time, reflecting both increased property values and more severe flood events.

Severity by flood zone — Zone V (coastal high-risk with wave action) averages $50,119 per claim with 88.5% of losses concentrated in building damage rather than contents — consistent with storm surge destroying structures while leaving contents losses relatively lower. Zone A (standard high-risk, 858,904 claims) averages $52,836. Zone X (moderate-to-low risk) averages $43,017 — a meaningful 19% reduction from high-risk zones, validating the SFHA classification system as a pricing signal.

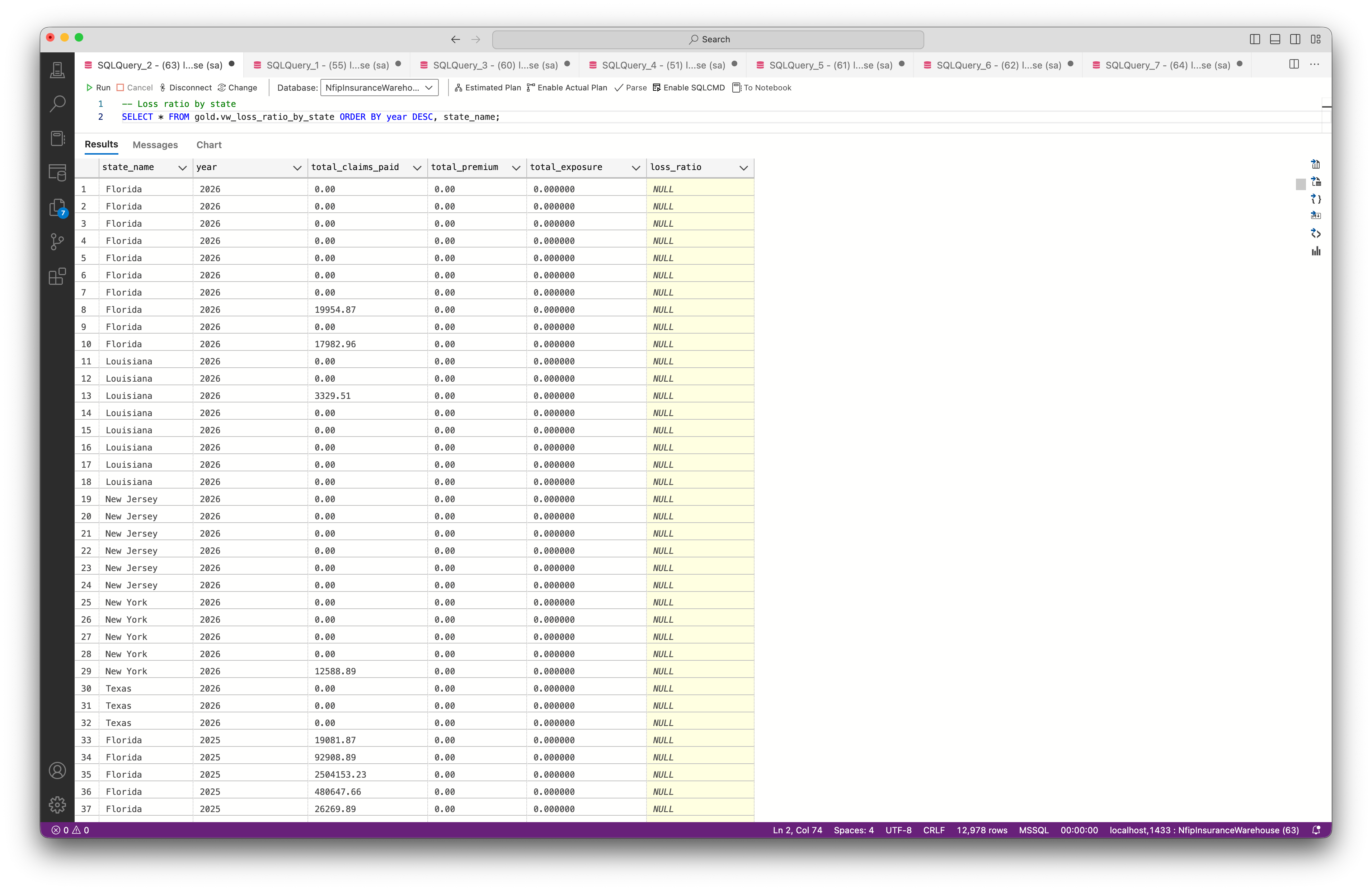

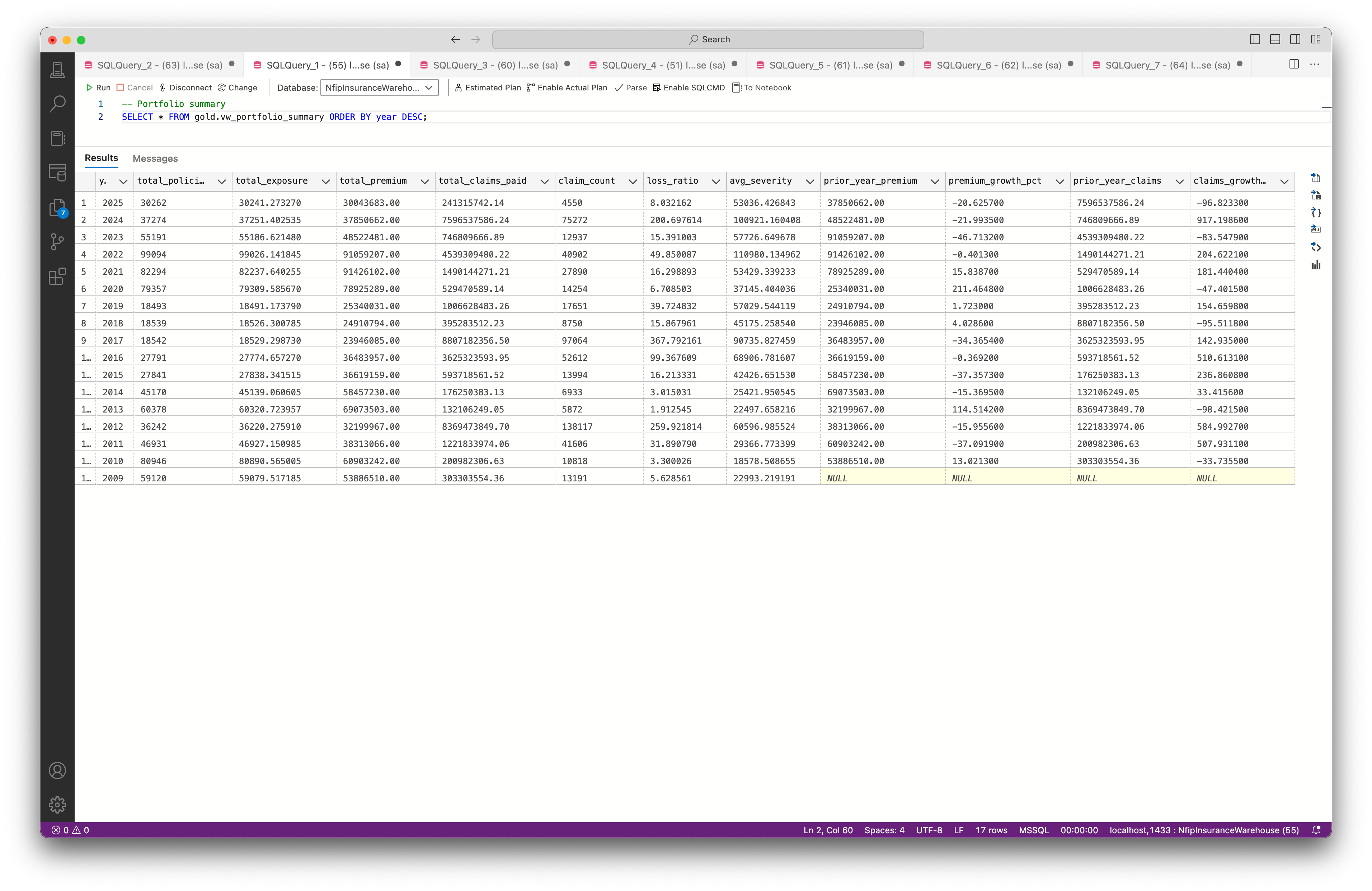

Portfolio trends — The portfolio summary shows year-over-year premium and claims trends from 2009 to 2025. 2022 stands out with a loss ratio of 49.85x driven by $4.54B in claims against a limited policy sample — reflecting the known underinsurance problem in high-flood-risk areas, particularly in Louisiana and coastal Texas.

Documented Limitations

The loss ratio view produces inflated ratios for some state-year combinations because the policy dataset is capped at 200,000 records per state by the API ingestion script, meaning premium totals do not represent full market exposure. The premium adequacy view shows numeric occupancy codes in some segments due to a dimension join gap between claims and policies data — claims do not carry a construction class field, so segments without policy matches show "No Policy Data." Both limitations are documented in the data quality notes.

These are honest constraints of working with redacted, capped public data — and the kind of data quality findings a real analytics team would surface and document in a production warehouse.

Why Flood Insurance

Flood risk is a first-order concern in Australian general insurance. The 2022 Eastern Australia floods triggered the largest insured loss event in the country's history, and the Australian Government established the ARPC Cyclone Reinsurance Pool the same year. NFIP is the closest publicly available analogue to Australian flood insurance data at transaction level — building a warehouse on it demonstrates competence in a domain every Australian insurer is actively investing in.

How It Connects

This warehouse is the engineering layer in a three-project insurance analytics stack. The Predictive Claims Liability Model consumes structured policy data to forecast pure premium using Tweedie regression across 677k policies. The Macroeconomic Resilience project stress-tests portfolio KPIs against ABS and RBA economic scenarios. Three projects, three layers of the same analytics stack — mirroring how data teams operate inside real insurers.

Technical Stack

Python (requests, pandas, pyodbc) for API ingestion and Bronze loading. T-SQL for all ETL logic, dimensional modelling, and analytics views. SQL Server (Azure SQL Edge via Docker) as the database engine. Draw.io for architecture and ERD diagrams. Git and GitHub for version control.

Key Numbers

1,703,977 claims ingested via REST API across 5 states. 1,000,000 policy records. 1,329,004 Gold-layer fact records after cleaning and deduplication. 823,465 policy facts with exposure-weighted metrics. 7 insurance KPI analytics views. 19,358-row date dimension spanning 1978 to 2030. 469 location dimension entries across 5 states.

Diagrams Section

Upload the following images to Sanity with these captions:

Image 1: Architecture diagram Caption: Data Architecture — OpenFEMA API to Gold Star Schema

Image 2: Star schema ERD Caption: Gold Layer Dimensional Model — 2 Fact Tables, 5 Dimensions

Image 3: ETL flow diagram Caption: ETL Pipeline — Bronze, Silver, Gold Transformation Steps

Image 4: Screenshot of vw_loss_ratio_by_state query results

Loss Ratio by State — Gold Layer Analytics View

Image 5: Screenshot of vw_portfolio_summary query results

Portfolio Summary — Year-over-Year Trends